-

My Perspective on the Potential Power of Generative AI

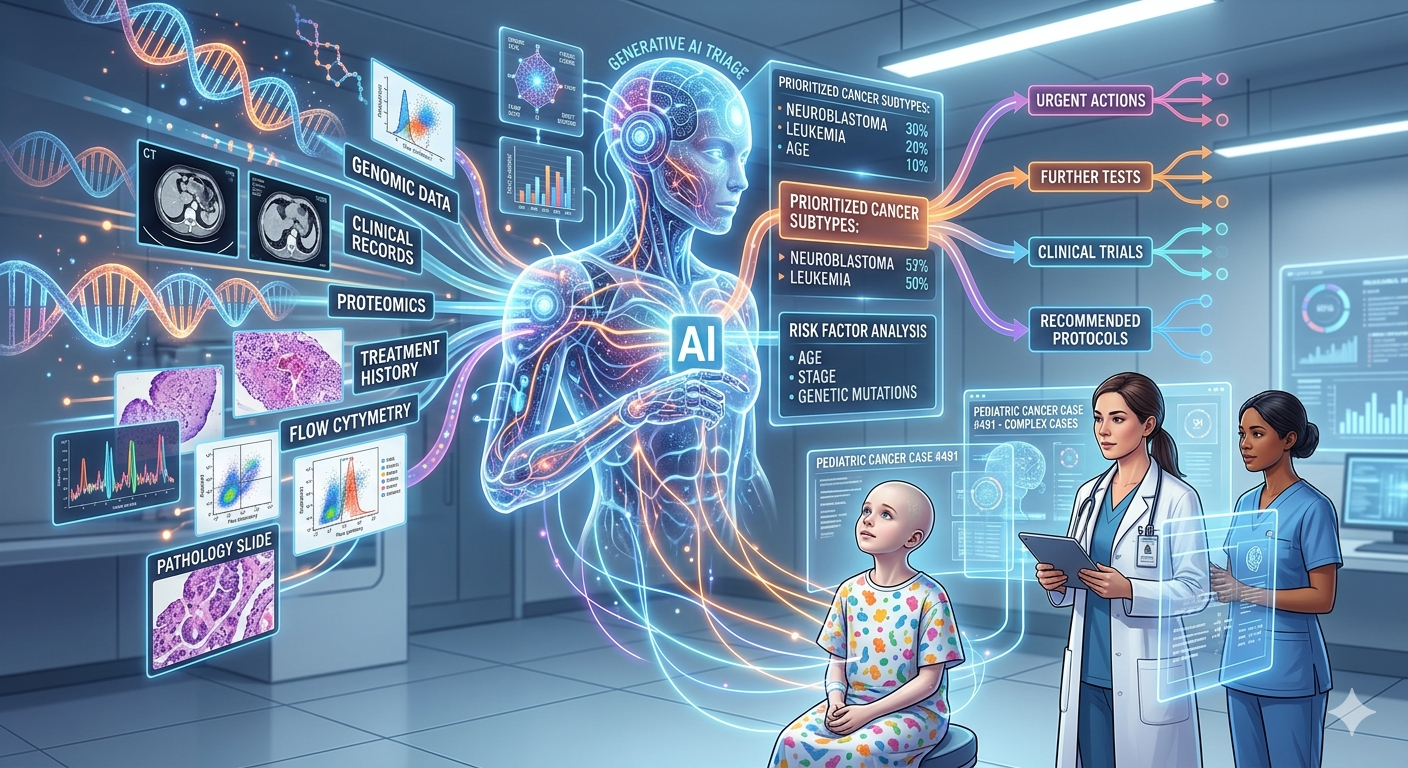

In April 2025, I presented on the potential of Generative AI in diagnosing health conditions, reflecting on my son Toby’s fibrolamellar carcinoma case. I argue that if this technology had been available earlier, it might have improved Toby’s chances of survival. The post discusses ongoing advancements and limitations of Generative AI in healthcare diagnostics.

-

ChatGPT: The Future, Like It Or Not

A discussion of LLMs, covering their potential, limitations, and real-world applications in fields like medicine, law, and design, along with a personal story about AI’s diagnostic capabilities.

-

How to use a RACI table and formula queries to control table access in Quickbase apps

How to use RACI table records and formula queries to control table record access in Quickbase apps.